How fast is fast enough? A discussion on computer efficiency.

When interacting with computers, we want them to work fast. After typing 18/3, we expect the answer - 6 - to be outputted right away. When texting a friend, we expect the messages to be delivered nearly instantaneously. But how do we quantify just how fast is fast enough? This is where efficiency and complexity come into play.

How do we describe algorithm speed?

When computer algorithms are developed, they are described in terms of their complexity. Complexity gives us a way to quantify the speed at which the computer will solve a problem relative to the size of the input to that problem. Mathematically, the complexity is expressed in terms of O(n) where n represents the size of the problem input.

What does it mean to be efficient?

An algorithm is efficient if the complexity is polynomial. For instance, problems that take O(n^8) time are polynomial and therefore considered efficient. If you are unfamiliar with Big-O notation or what it means for a function to be polynomial, we will provide a brief definition below.

The Big-O notation showed above, where the letter O proceeds a function, is used to express the complexity of an algorithm in a simplified form, without including leading constants and smaller factors. For example 5n and n are both O(n), as the big-O.

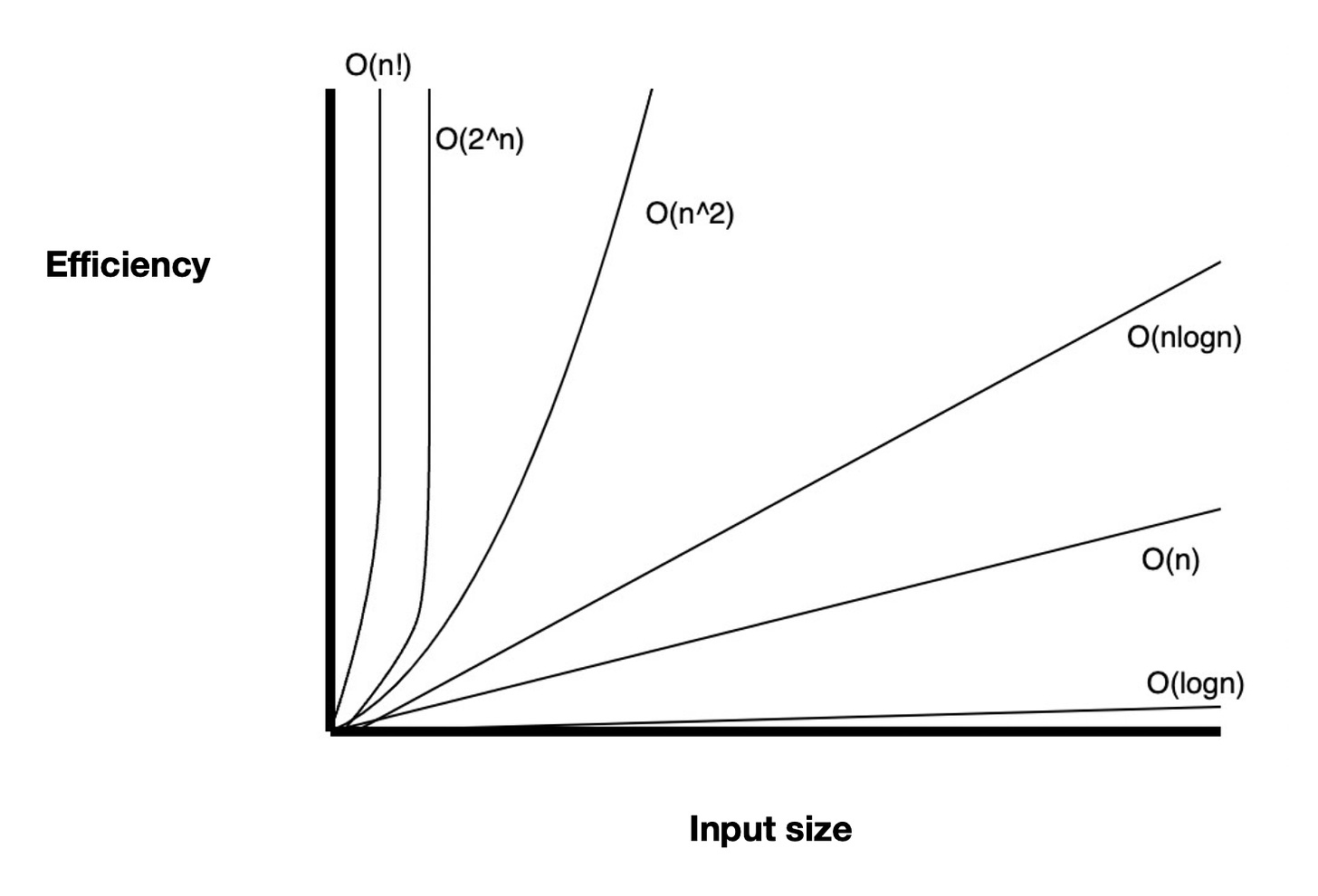

A function is polynomial if it is O(n^c) where c is a constant. If c is a variable, then the function becomes exponential and ultimately has a longer runtime than a polynomial function. The image below demonstrates that functions greater than polynomial functions have larger efficiencies as the input size grows.

Efficiency translates to the problem being something a computer is capable of solving in a realistic amount of time with respect to the input size. Relative to the size of the input, the time the computer requires to solve the problem scales polynomially - so we expect the solution will be relatively quick to reach.

Inefficient algorithms are those with runtimes that grow faster than polynomial runtimes. For instance, exponential runtimes eclipse polynomial runtimes as n grows, making them inefficient. Although inefficient algorithms give us a solution to problems eventually, the time they require to be solved can become far too large to be realistically usable. With large input sizes, inefficient algorithms can require years or even lifetimes of compute power to solve.

We want to create efficient algorithms so that regardless of what the user inputs, the computer is able to provide the solution relatively quickly.

How does efficiency apply to computer operations?

To make efficiency clear, we’ll walk through a quick example. Say a computer takes in some number, x, and prints that number x to the screen. Although this appears to be a simple operation, we can dive a little deeper into the complexity of this problem and whether or not it can be solved efficiently.

If we want to print a number to the screen, we print each digit of the number sequentially. Therefore the amount of print operations the computer will do is equal to the amount of digits the number consists of. For example, printing the number 54 requires 2 prints while the number 68682 requires 5 prints.

Here we can observe a relationship between the size of our input and the complexity of the operation. Specifically, we see that the complexity grows linearly with the size of the input. Mathematically, this linear relationship is represented as O(n). Since the complexity is polynomial, we can conclude a print operation like this one is efficient.

A counter example of a print operation that would not be efficient is one where each combination of an input is printed. For example, if 54 is the input, the computer would print 5 followed by 54, requiring 3 prints. Alternatively for 68682 we would print 6, 68, 686, 6868, 68682, requiring 15 prints.

In this case the relationship between the size of our input and the complexity of the operation is O(n^n), as we will print n unique combinations, and each combination can be at most n digits long. Since the complexity is exponential, we can conclude a print operation like this one is inefficient.

Why does efficiency matter?

Software engineers consider efficiency when writing code and designing systems in order to improve the end user experience. With attention spans dwindling, and the dependency on technology increasing, it is essential that software products provide fast results.

A tangible example of this for many software engineers is APIs. An API is an application interface that receives requests, runs backend calls and computations, and returns a response to the user. Engineering the backend calls and computations to be efficient is crucial to create an API that returns a response in a relatively fast amount of time relative to the API input.

APIs can be used for just about anything. Regardless of the API use case, it is important to consider the API efficiency as this impacts the end customer experience.

Conclusion

To have a standard for speed, developers describe algorithms in terms of their complexity. Ultimately, complexity determines whether an algorithm is efficient or not. This in turn impacts the feasibility of an algorithm. Society expects technology to work fast, and these definitions help developers determine when algorithms are fast enough.